AI now powers contemporary website protection systems, posing new challenges to web scrapers. Spoofing IP addresses and user agents is no longer enough to avoid detection. Sophisticated systems like DataDome protection now inspect trillions of signals daily to identify bots among millions of genuine human visitors.

Scraping DataDome-protected websites is certainly possible, but it is also extremely difficult. It involves mixing multiple web scraping tools and methods:

- Mimicking human behavioral biometrics

- Solving canvas fingerprinting

- Dealing with browser fingerprinting identification

In reality, thousands of businesses, including brands like Amazon, scrape DataDome-protected websites. Unlike DDoS bots or other malicious actors, legitimate scraping requests do not have an ill intent, but typically gather publicly available data for:

- Market research

- Price comparison

- Ad verification

- Competition monitoring

If you fall into this category, we will explain how DataDome blocks work below. We will also outline tools that help bypass these blocks, but also mention why they sometimes fail due to sophisticated detection methods.

What Is DataDome?

Fabien Grenier and Benjamin Farbe founded DataDome as an online fraud prevention and bot control cybersecurity business in 2015. Its main goal is to provide website, API, and application protection. To achieve this, DataDome branches its functions into five categories:

- Bot protection

- DDoS protection

- Page protection

- Account protection

- Ad protection

In 2024, DataDome won the Cybersecurity Excellence Award for Fraud Prevention. The following year, CyberRisk Alliance SC Awards selected it as a Best Application Security Solution finalist. Both rewards carry significant weight in the cybersecurity industry, and DataDome is now a partner of numerous major brands, like Google Cloud, Amazon Web Services (AWS), and Salesforce.

DataDome's major clients also include Foot Locker, Tripadvisor, SoundCloud, Vinted, PayPal, and many others. It is on par with similar IT giants like Akamai and Cloudflare. However, the latter two are primarily Content Delivery Networks (CDNs), while DataDome emphasizes AI-powered cybersecurity solutions often used on top of CDNs.

E-commerce platforms, ticketing and payment apps, travelling, and hospitality services often subscribe to DataDome's protection. It safeguards their user accounts against credential stuffing, protects pages against cross-site scripting (and other) cyberattacks, protects ads against click fraud, and, yes, blocks web scraping.

Please note that bypassing DataDome at scale is designed to be extremely difficult. Think of it that way. If Baron Harkonnen chose a SaaS to protect its spice shipments, it would likely be DataDome.

How DataDome Detects and Blocks Bots

AI powers DataDome cybersecurity systems, but it also uses a physical network of 35 Points of Presence (PoP) to protect its clients from bot traffic. PoPs are smaller hubs placed in major worldwide areas, such as densely populated cities.

Similarly to a Web Application Firewall (WAF), DataDome also protects servers from unauthorized access and malicious traffic. However, firewalls inspect incoming traffic once it reaches their network, so the attack already uses the network's resources.

Points of presence

Instead, DataDome uses one of its 35 PoPs to inspect the traffic. Suppose a hacker is targeting a website in Chicago from London. A traditional firewall would intercept and stop the attack once it reaches the server network in Chicago.

DataDome uses its PoP in London (or the nearest location) so that the attack never gets close to its client's origin server. This way, it provides its main selling point, called Zero Latency protection. It protects its clients from origin server resource exhaustion so that all other visitors enjoy a safe and uninterrupted service.

Also, because traffic inspection happens on the same network that a cyber threat was spotted, it is much faster. Because it doesn't have to wait for the traffic to reach the targeted network's firewall, DataDome identifies bots in under 2 milliseconds, which is a leading industry position speedwise.

Request analysis

DataDome analyzes all requests in real time. It uses a machine-learning algorithm-powered multi-layered protection that compares hundreds of information bits to uncover any discrepancies in a selected profile. Keep in mind that everything happens on PoPs in under 2 milliseconds.

Firstly, DataDome analyzes the digital handshake, which is when two computers establish a connection. One part is doing a TLS/SSL handshake where both sides exchange encryption rules, session keys, and other elements required for secure two-way communication.

DataDome intercepts and analyzes this handshake, called TLS Fingerprinting. Web scrapers, headless browsers, and tools like Puppeteer and Selenium typically have unique signatures. If you are spoofing a Firefox browser, but it has a different TLS signature, you will most likely get a DataDome block.

Behavioral analysis

Behavioral biometrics analysis is a field that leaped forward alongside AI development. It also proved crucial in cybersecurity, as machine algorithms behave significantly differently compared to humans. DataDome uses behavioral analysis to monitor user interactions such as mouse movements and clicks.

That's why it's essential to mimic human browsing behavior when web scraping. For example, simulating mouse cursor trajectories and clicks helps your scraper avoid detection. The same applies to the clicking rate, which should be human-like and not too fast. Otherwise, your scraper will be confused for a malicious bot.

DataDome proceeds with checking hardware behavior. It uses canvas fingerprinting that renders a hidden element and marks its fingerprint. Because GPUs render elements slightly differently, it can tell when there's a discrepancy in the declared operating system and the rendering results.

This is not an exhaustive list of DataDome checkups, as the complete list encompasses over a thousand unique data points. In the end, DataDome calculates a trust score for every visitor based on their behavior and system characteristics. Take a look at the table below for a clear workflow.

Allow access

A genuine user shopping online

Block access

An identified malicious bot, a request that fails TLS fingerprint

Issue a challenge

A user gets a DataDome CAPTCHA or JavaScript challenge

Although the underlying technology is highly complex, the results are straightforward and actionable.

DataDome uses CAPTCHA challenges to verify if the user is human when it suspects bot activity. If you pass DataDome CAPTCHA, you are allowed access, and you will get errors like 403 Access Denied.

Most Effective Methods to Bypass DataDome

DataDome is an extremely sophisticated and efficient anti-bot and anti-scraping service used by websites to block non-human visitors. But there are ways to bypass DataDome if you're ready for some heavy lifting and mixing more than a few methods.

Residential and Mobile Proxies

In 2026, proxy rotation for web scraping is no longer an option, but a necessity. DataDome uses IP tracking alongside other detection methods, so if you send hundreds of scraping requests from the same IP address, you will definitely get blocked.

We recommend opting for mobile or residential proxies as they have the best IP trust scores. Mobile IPs are particularly hard to block because mobile carriers assign the same IP to thousands of users simultaneously. Services like DataDome are cautious about banning mobile IPs, because it may negatively affect unrelated users.

This is also the most expensive proxy type. Alternatively, internet service providers (ISPs) issue IP addresses to genuine residents, which you can use as high-trust-score residential proxies. If you implement proxy rotation, your web scraping tool will use different IPs to scrape DataDome-protected websites with reduced detection and ban chances.

Browser Fingerprint Spoofing and TLS/HTTP2 Emulation

Even if you rotate dozens of high-anonymity residential proxies, DataDome can still detect your scrapers. Your next step should be to take care of browser fingerprinting. This surveillance method inspects browser specifics:

- Fonts

- Plugins

- Resolution

- Operating system

- Timezone

- Cookies and many more.

With proxies, you are sending scraping requests from different IPs, but DataDome sees that it comes from the same device, inspecting other metrics. Rotating user agents alongside proxies is one solution. Also, consider using anti-detect browsers that allow creating hundreds of online profiles isolated from each other.

If you still run into trouble, you may need HTTP/2 and HTTP/3 emulation tools. Modern websites use these faster and more reliable protocols, but many scraping tools still utilize HTTP/1.1. If you spoof an iPhone (HTTP/3 protocol) to scrape the web but your requests arrive using the HTTP/1.1 protocol, then DataDome will mark your IP address as suspicious or even block it.

Stealth and Headless Browsers (Playwright, Puppeteer)

If you're scraping a heavily protected website, using tools like Selenium, Puppeteer, or Playwright can help automate browser actions to bypass DataDome. But even these browser automation tools aren't sufficient to bypass DataDome on their own.

In fact, standard headless browsers like Selenium are easily detected by DataDome, leading scrapers to use fortified versions. Consider using the Stealth plugin to add another layer of online anonymity.

It patches several vulnerabilities, such as plugin and language spoofing, WebGL rendering, and hides the navigator.webdriver property, which gives out you are using browser automation. Using tools like Selenium Stealth, Puppeteer Extra Plugin Stealth, and Playwright Stealth can help in advanced fingerprinting solutions.

Automated CAPTCHA-Solving

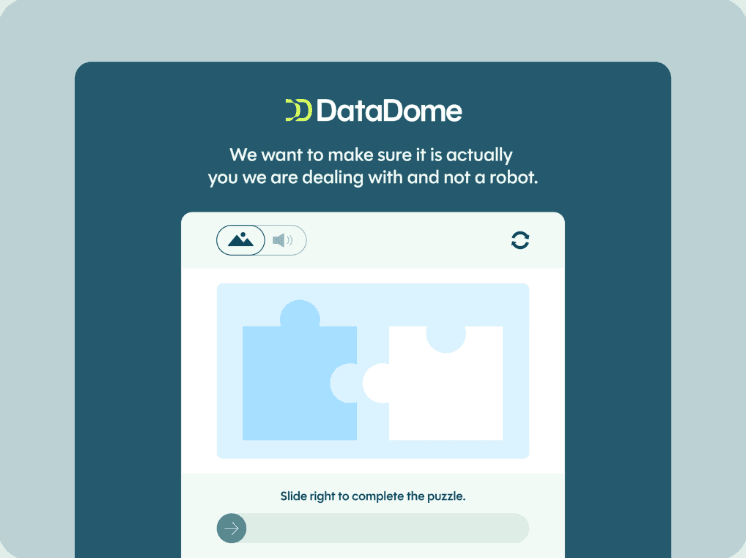

In many cases, DataDome issues a CAPTCHA if something in the user profile doesn't add up. This is how a typical DataDome CAPTCHA looks:

The slider challenge illustrated above is particularly widespread. It monitors the mouse speed and movement for human behavior patterns. Most bots will move the slider in a perfectly straight line at the same speed, which is a red flag for DataDome.

Using CAPTCHA-solving services can help manage challenges posed by DataDome during web scraping. Contemporary CAPTCHA bypassers use machine-learning-based OCR tools to solve CAPTCHAs triggered by DataDome. They mimic human browsing behavior and generate a puzzle-solving cookie, which you can add to your scraper and proceed with other tasks.

Have you encountered more types of DataDome CAPTCHA challenges? We invite you to share them with our Discord community and discuss effective solutions.

Scraping APIs

As you can see, there's a lot to take care of to bypass DataDome. It involves rotating user agents, switching between residential or mobile proxies, using headless browsers, or CAPTCHA-solving services. And even that does not guarantee access to DataDome-protected resources.

Another solution is scraping APIs. Using a web scraping API can help manage request headers, proxy rotation, and CAPTCHA solving to evade DataDome in one go.

Web scraping APIs use high-quality proxies by default to bypass anti-scraping protection. Also, web scraping APIs can handle request header management, premium proxy rotation, and advanced fingerprinting evasion to evade firewalls like DataDome. Also, if the request fails, the scraping API will retry it using different metrics to increase success rates.

Why DataDome Bypass Methods Often Fail

DataDome is a tough nut to crack, and the method you used in 2025 may not be viable in 2026. Over the last few years, it has moved from a relatively straightforward rule-based detection to an AI-powered multi-layered protection service.

DataDome processes five trillion signals daily, which dramatically improves its bot detection capability. Visitor profile inconsistencies are the main DataDome block reason, as it is able to analyze thousands of interconnected data bits to notice them.

What's more, DataDome continuously comes up with more anti-scraping challenges. The WASM (WebAssembly) challenge is one of the latest anti-bot protection tools. It forces visitors' devices to solve complex math problems and inspects the results.

Because different CPUs have different problem-solving patterns, DataDome can fingerprint them and look for inaccuracies. For example, you may be spoofing an eight-core macOS device, but the CPU solution comes from a dual-core Windows device, triggering DataDome protection.

Keep in mind that this is very taxing on device resources. If you're doing large-scale scraping, your device may be forced into solving hundreds of complex WASM challenges simultaneously, significantly slowing it down. Also, if DataDome notices you are solving the challenge too slowly, it will lower your trust score.

Best Practices for Developers

Scraping DataDome-protected sites is extremely difficult. Mimicking human behavior is essential to avoid detection by DataDome, as bots typically exhibit static behavior patterns. But that's not all.

You must also ensure lawful and responsible data gathering. Legal considerations in web scraping include understanding the target website’s terms of service and the potential for legal action if scraping violates those terms.

For example, if you're collecting personally identifiable data (PII), you must adhere to international rules like the General Data Protection Regulation (GDPR) in the EU. Here are the best practices that developers should follow to bypass DataDome.

Combine Multiple Methods

Because DataDome analyzes hundreds of interrelated information bits, you must secure them all to bypass it. If you are spoofing an Android, you must ensure you send correct resolution, operating system, and TLS handshake details, to name just a few metrics.

You must use mobile or residential proxies, but also align the timezone and geolocation settings to the proxy IP address. Even then, sophisticated DataDome algorithms may display CAPTCHA, so you may also require an efficient CAPTCHA solver, especially if you're scraping on a large scale.

Simulate Real Users

AI-powered behavioural biometrics analysis is highly efficient. Businesses like ClearView AI demonstrated how accurately AI detects movement patterns, facial expressions, and, generally, anything that the human body does.

DataDome does exactly the same in the virtual space. It checks for mouse movement patterns, clicking and browsing speed, and compares each visitor to thousands of others to verify their authenticity. In the majority of cases, you will have to rely on headless browsers that are capable of mimicking a real person's behaviour online, such as implementing a delay function for form filling and clicking.

Handle Failures

DataDome assigns a trust score to each visitor based on their behavior and characteristics to determine if they are human or a bot. Based on the results, it may display a different error code.

For example, a moderately suspicious visitor profile may only trigger a simple JavaScript challenge. More significant inaccuracies may result in an interactive CAPTCHA, and a zero-trust score profile will be blocked.

Your scraper must know how to handle all cases. It may try solving the JavaScript challenge and retry scraping in the first case scenario. But if it sees an error 403, it should switch to a different profile before attempting to gather the data again.

Monitor for Changes

Bypassing DataDome is a cat-and-mouse game. The cybersecurity professionals at DataDome are quick to come up with new anti-scraping methods. Meanwhile, web scraping professionals and enthusiasts find workarounds until they are also blocked, demanding new solutions.

You must regularly check what works and what doesn't to avoid wasting resources on outdated DataDome bypass methods. For example, in 2025, DataDome started focusing on an intent-based AI detection model.

It now separates legitimate scrapers for LLM models from malicious ones trying to obtain copyrighted or otherwise restricted data. You must now also ensure your web scraping adheres to the website's rules and international data protection standards to minimize DataDome restriction risks.

Respect the Terms of Service and the Law

We leave the most essential practice tip last. Personal data protection is one of the main pillars of modern internet structure, and unethical scraping threatens it. Here are five tips to remain on the safe and legal side.

- Ethical data collection practices involve respecting website terms of service and adhering to data privacy regulations.

- Web scraping publicly available data is generally legal as long as it does not cause damage to the website.

- Gathering personally identifiable data must adhere to local and international data protection standards.

- Never scrape copyrighted or otherwise restricted information.

- If the website or application provides one, use its official API for mutually consensual data exchange before scraping it.

Conclusion

DataDome analyzes individual user behavior, geolocation, network, and fingerprints using machine learning algorithms. Its reliance on artificial intelligence and a wide range of bot detection tools successfully blocks many scrapers and scraping methods.

But keep in mind that it is not DataDome's goal to block all data collection attempts. If your scraper signals human-like behavior patterns and does not harm the website in any way, you are more likely to succeed.

It is just as important to remain on the legal side of scraping. By respecting the website's ToS, robot.txt file, and national and international data privacy laws, you will avoid unnecessary and sometimes very risky situations.

Can Datadome bypasses work reliably at scale?

Yes, you can bypass DataDome at scale, but it is also very challenging. You will have to mix several methods, such as proxy rotation, headless browsers, simulate human-like behavior, and resolve errors.

How can I tell if a website is protected by Datadome?

The best way to verify whether a website is using DataDome protection is by checking the DataDome cookie in your browser's local storage.

In most browsers, you can open the developer tools by pressing F12, locate the Application tab, and find the Cookies section. If you find a cookie named datadome, then it protects the website you're trying to scrape.

Is it possible to bypass Datadome without using a headless browser?

Bypassing DataDome without headless browsers is possible if you are highly versed in web scraping. You will have to solve TLS fingerprinting using additional Python libraries and generate functional datadome cookies.

Alternatively, you can rely on third-party API services. In reality, this possibility is somewhat theoretical, considering the ever-evolving DataDome protection methods.

Does solving the CAPTCHA mean I’ve fully bypassed Datadome?

No, solving DataDome CAPTCHA is only one step towards securing a good trust score. It will continue monitoring your behavior on the website.

If it identifies any bot-like behavior, it will immediately lower the trust score. You may face more CAPTCHAs or even lose access via IP address block.

Is it legal to bypass Datadome, or could I get in trouble?

That depends. In most cases, ethically scraping publicly available online data is perfectly legal, as long as it does not harm the website in any way. However, if you violate the website's ToS, robot.txt file, or data protection laws like GDPR, you may face civil litigation or an expensive lawsuit.